My friend Michael J. Swart has a rule of thumb he calls Swart’s Ten Percent Rule.

If you’re using over 10% of what SQL Server restricts you to, you’re doing it wrong.

After a recent discussion on Twitter, I wondered what it would look like if I had 32,767 databases on one instance of SQL Server (that’s the hard limit according to the documentation).

This is a Very Bad Idea. Don’t do this in a production environment. I performed this experiment on a Docker container running SQL Server 2019 Preview CTP 2.0 so that you don’t have to.

Initial plan

The first problem was the initial size of each new database. Despite putting the model database in the simple recovery model and shrinking it as small as I could (2 MB for the data file and 512 KB for the transaction log), the default size of a new database on SQL Server these days is 8 MB, no matter how much we try to shrink it afterwards.

In the second attempt, I considered simply copying the model database files 32,767 times, and mounting them separately. In the words of kids these days, “L-O-L.”

Initial result

In any event, none of this worked very well. I got about as far as I expected to (just over 3,200 databases created, proving out Swart’s Ten Percent Rule) before the instance ran out of memory. It sputtered a little before eventually failing. I was jumping between SQL Server Management Studio and Azure Data Studio respectively to see what was going on.

Next steps

After a quick sudo docker rm followed by sudo docker run to recreate the container, I thought about this “problem” again.

The minimum database size of 8 MB doesn’t help my home lab’s disk space situation. I would need 256 GB for the data files and another 16 GB for the transaction logs, and who has that kind of time to set up a large enough virtual machine or container?

Joey D’Antoni noted that it’s only really feasible to have so many databases if auto-close is enabled on all of them. I especially like this tweet because it is a subtweet. I can still feel Joey’s eyes judging me, even now.

While I believe this is cheating to have the databases simply registered in the master database as opposed to having them open, there’s a point where common sense is necessary.

Who needs roads rules?

Not one to be defeated by sensible thinking and resource constraints, I decided to push ahead with a new plan.

In my third attempt, I figured that cheating was indeed allowed. I would create a new database, set it offline, and delete the underlying files. This would eliminate my storage requirements beyond around 10 MB at a time. To make things interesting, I broke this process up into four separate scripts, with a range of 8192 databases in each script, and let it run.

What’s the worst that could happen?

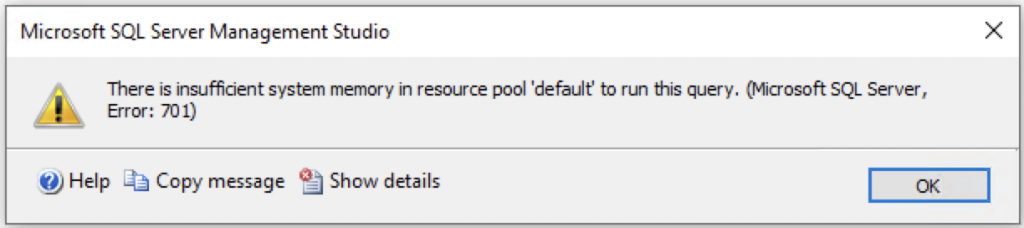

While I believe that pictures paint a thousand words, this blog post is already up around 1,000 words, so here’s the actual error message:

Msg 1835, Level 16, State 1, Line 3 Unable to create/attach any new database because the number of existing databases has reached the maximum number allowed: 32765.

“But Randolph,” I hear you cry, “that doesn’t say 32,767.” You are correct, dear reader. In fact, this number is two databases short of the maximum number allowed on a SQL Server instance, according to the official documentation. What gives?

The first of the two missing databases is the resource database, a read-only database containing all of the system objects in SQL Server.

The second is reserved for SQL Server replication, whether it be the publisher, distributor or subscriber database.

If you want to play along at home, here’s the script I used.

The script, which you absolutely should not use in a production environment

Note that while I successfully performed the experiment on a Dockerized version of SQL Server 2019 Preview CTP 2.0, the script below is designed for a Windows platform because it incorporates xp_cmdshell. Extended stored procedures are not supported on SQL Server on Linux (which includes Docker containers), and my bash script with sqlcmd hackery should never see the light of day.

-- Copy this whole script to four different SSMS windows and adjust the @top and @base values

-- accordingly.

--

-- Be advised that this may cause SSMS to crash if all four are run at the same time, so maybe

-- save the four scripts to the file system and run them from the command line using sqlcmd -i.

--

-- This script is free of copyright and may be used for any purpose, but it is not designed for

-- a production environment.

--

-- ** Don't do anything that could be perceived as a career-limiting move. **

--

USE master;

GO

SET NOCOUNT ON

DECLARE @base INT = 0;

DECLARE @top INT = 8192;

--DECLARE @base INT = 8192;

--DECLARE @top INT = 16384;

--DECLARE @base INT = 16384;

--DECLARE @top INT = 24576;

--DECLARE @base INT = 24576;

--DECLARE @top INT = 32768;

DECLARE @id INT = @base;

DECLARE @db NVARCHAR(10);

DECLARE @sql NVARCHAR(MAX) = N'';

DECLARE @path NVARCHAR(255) = N'C:\Program Files\Microsoft SQL Server\MSSQL15.MSSQLSERVER\MSSQL\DATA';

DECLARE @deldata NVARCHAR(255) = N'';

DECLARE @dellog NVARCHAR(255) = N'';

WHILE (@id < @top)

BEGIN

SET @db = FORMAT(@id, '00000');

SET @sql = N'CREATE DATABASE [' + @db + N']

ON PRIMARY (NAME = N''' + @db + N''', FILENAME = N''' + @path + N'\' + @db + N'.mdf'', SIZE = 2MB, FILEGROWTH = 0KB)

LOG ON (NAME = N''' + @db + N'_log'', FILENAME = N''' + @path + N'\' + @db + N'_log.ldf'', SIZE = 512KB, FILEGROWTH = 0KB);

ALTER DATABASE [' + @db + N'] SET OFFLINE;';

--PRINT @sql;

EXEC sp_executesql @sql;

SET @deldata = N'DEL "' + @path + N'\' + @db + N'.mdf"';

SET @dellog = N'DEL "' + @path + N'\' + @db + N'_log.ldf"';

--PRINT @deldata;

--PRINT @dellog;

EXEC sys.xp_cmdshell @deldata;

EXEC sys.xp_cmdshell @dellog;

SET @id = @id + 1;

END;

SET NOCOUNT OFF;

On my home lab, this took just over an hour to complete.

Here’s a script to blow them all away if you don’t want to destroy your container, or are running this on Windows. It took approximately 5 minutes on my home lab.

DECLARE @id INT = 0;

DECLARE @db NVARCHAR(20) = N'';

WHILE @id < 32768

BEGIN

SET @db = FORMAT(@id, '00000');

EXEC ('DROP DATABASE [' + @db + ']');

SET @id = @id + 1;

END;

Summary

If there are any limits of SQL Server you would like me to test next, feel free to leave a comment below and I’ll see about doing an experiment.

Photo by Trym Nilsen on Unsplash.